Touch UI to Generative Music Sequencer in SuperCollider

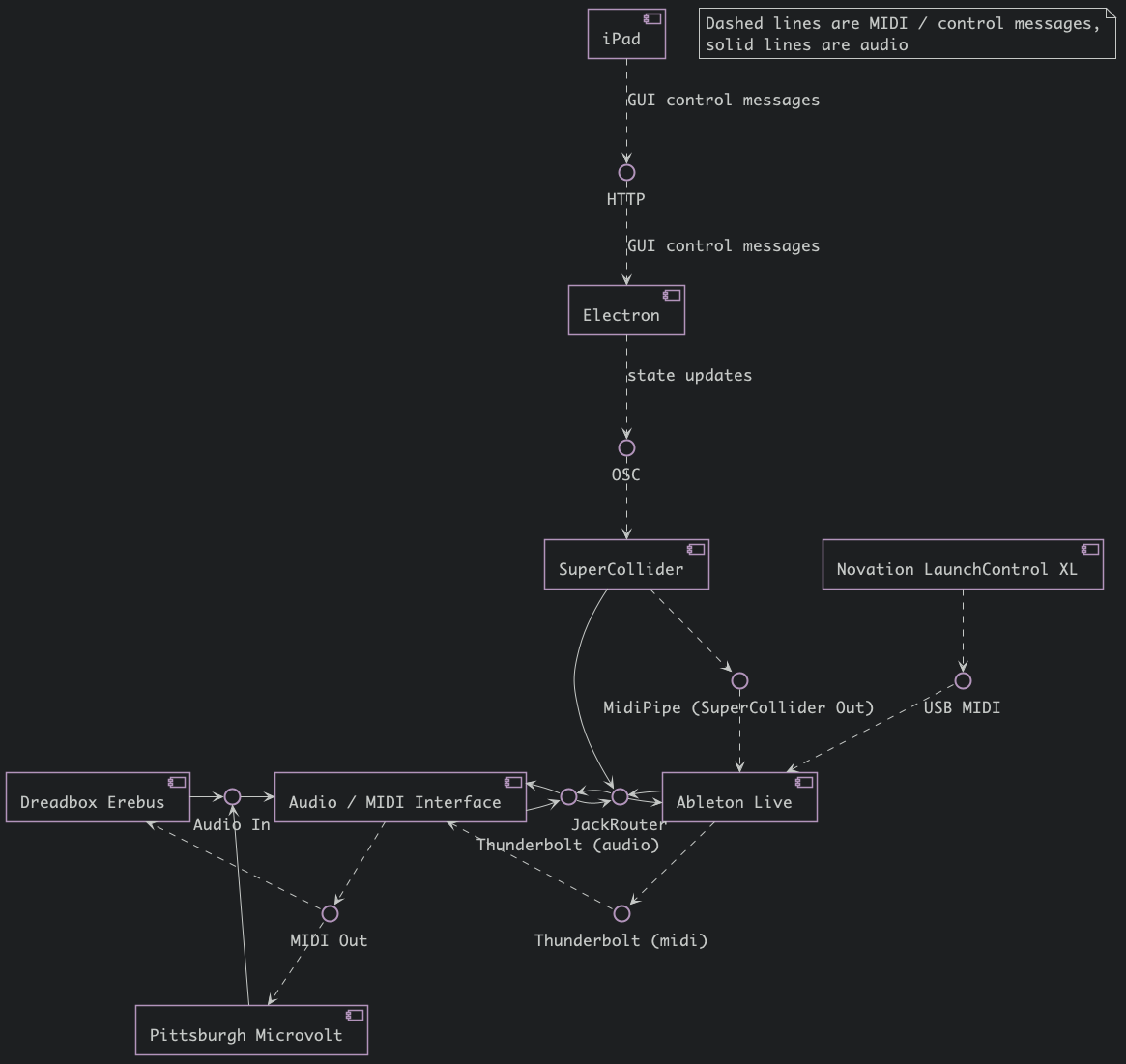

Explorations in integrating SuperCollider instruments into an Ableton Live set. A web-based touch-screen UI controls SuperCollider patches through a Node.js server (running inside an Electron app). The tempo clock is synced from SuperCollider to Ableton Live using Ableton Link.

Generative Sequencer Performance Touch UI Demo

Genesis

This work stems from SuperCollider instruments and interactive installation work that contain generative music systems. Out of this have emerged some JavaScript frameworks for communicating with SuperCollider.

The Interface

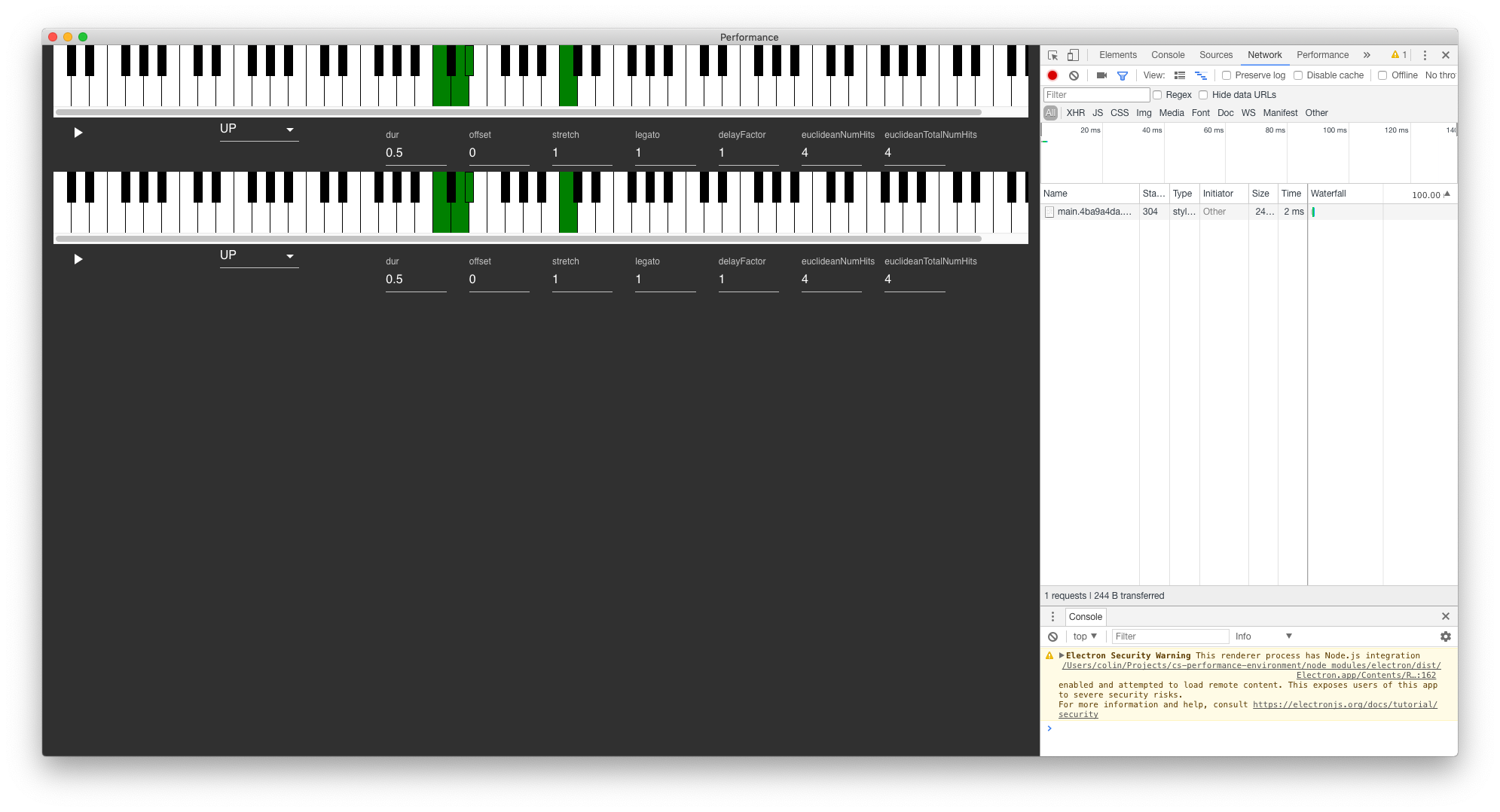

The interface is two-fold. The first part is rendered in the main window of the Electron app when it opens, and is meant to be a "debug" interface.

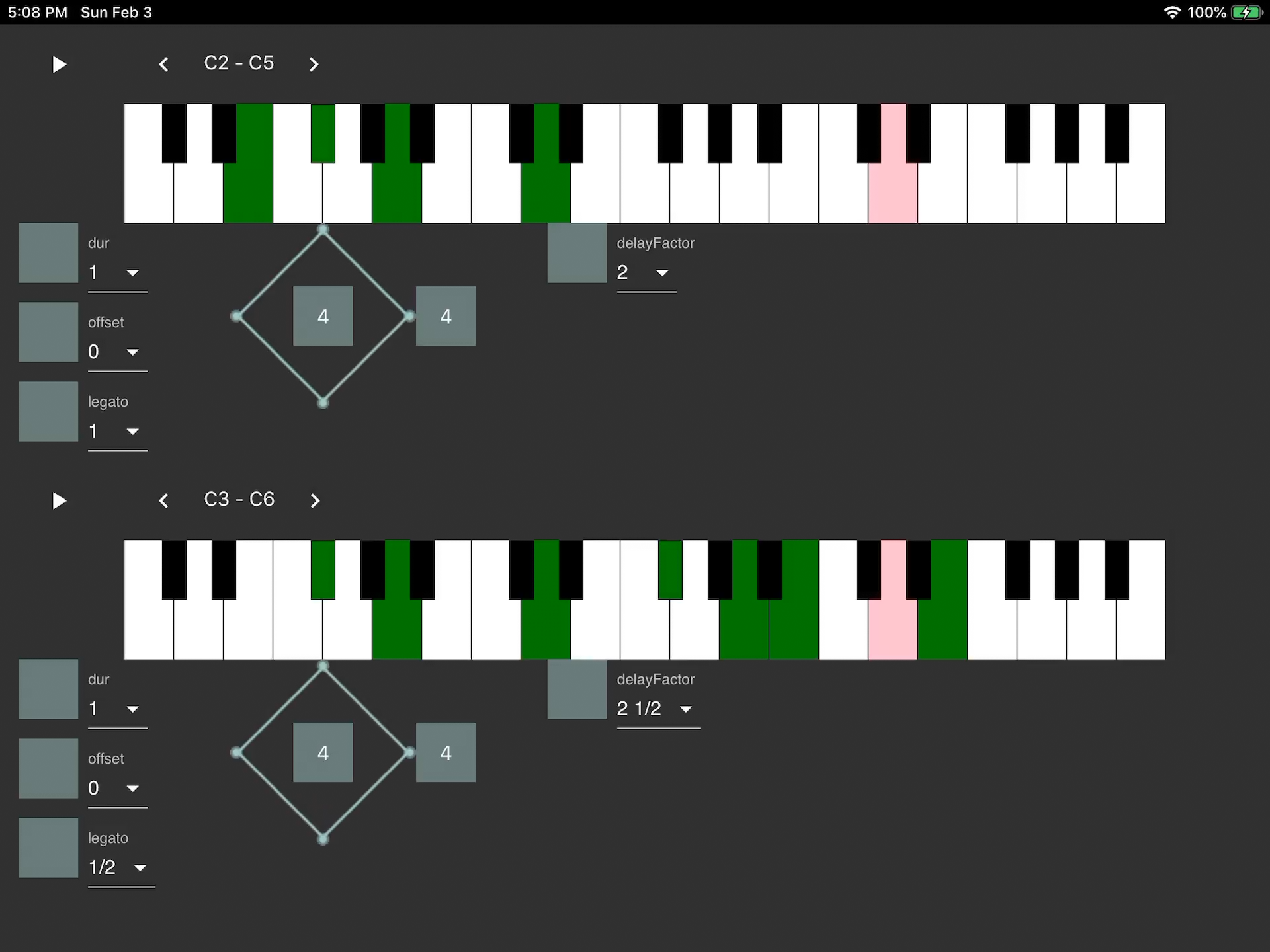

The second is a web-based touch screen interface served by the Electron app as a web page. This is intended to be the main performance interface.

Pan Gesture

On some parameters I am trying a "pan" gesture: the parameter can be grabbed and "panned" up or down, or a value can be selected directly.
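
As a rough illustration of the idea (a sketch, not the code from the touch UI), a vertical drag can be turned into a stepped, clamped parameter value. The function name `panValue`, the `pxPerStep` sensitivity, and the choice that dragging up increases the value are all assumptions made for this example.

```typescript
// Hypothetical helper: map a vertical drag distance to a new parameter value.
// dragPx is the pointer's y movement since the grab started (negative = upward).
function panValue(
  startValue: number,
  dragPx: number,
  pxPerStep: number,
  min: number,
  max: number
): number {
  // Assumption: dragging up (negative y) raises the value, one step per pxPerStep pixels.
  const steps = Math.round(-dragPx / pxPerStep);
  // Clamp so the parameter never leaves its allowed range.
  return Math.min(max, Math.max(min, startValue + steps));
}

// Grabbed at 4, dragged 60px upward at 20px per step.
const panned = panValue(4, -60, 20, 1, 16);
// A long downward drag bottoms out at the minimum.
const clamped = panValue(4, 400, 20, 1, 16);
```

Selecting a value directly would simply bypass this mapping and set the parameter to the tapped value.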

Generative Sequencer Functionality

The sequencer has some straightforward controls such as the duration of each event and the duration of each note within the event duration ("legato").
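
To make the relationship between these two controls concrete, here is a minimal sketch that assumes legato is expressed as a fraction of the event duration, which is how SuperCollider's event system treats it. The type and function names are invented for the example.

```typescript
// Hypothetical names; the real sequencer lives in SuperCollider.
interface EventTiming {
  dur: number;    // time between event onsets, in beats
  legato: number; // fraction of dur during which the note sounds
}

// Sounding length of one note, in beats.
function sustainBeats(timing: EventTiming): number {
  return timing.dur * timing.legato;
}

// Convert a length in beats to seconds at a given tempo.
function beatsToSeconds(beats: number, bpm: number): number {
  return (beats * 60) / bpm;
}

// An eighth-note event (0.5 beats) played at half legato sounds for 0.25 beats.
const sustain = sustainBeats({ dur: 0.5, legato: 0.5 });
```

With a legato below 1 the notes are separated by silence; at 1 each note lasts until the next event begins.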

The notes are played in the same order they are selected, so unlike an arpeggiator, the order of notes played in these sequencers can be modified by removing notes and re-adding them in a different order.
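
The ordering behavior can be sketched with a plain array that remembers selection order. This is only an illustration; `toggleNote` and the use of MIDI note numbers are assumptions, not names from the project.

```typescript
// Hypothetical helper: toggle a note in the selection while keeping selection order.
// Removing a note and re-adding it moves it to the end of the sequence.
function toggleNote(selected: readonly number[], note: number): number[] {
  return selected.indexOf(note) !== -1
    ? selected.filter((n) => n !== note)
    : [...selected, note];
}

let selectedNotes: number[] = [];
for (const n of [60, 64, 67]) {
  selectedNotes = toggleNote(selectedNotes, n); // ends as [60, 64, 67]
}
selectedNotes = toggleNote(selectedNotes, 60); // remove 60 -> [64, 67]
selectedNotes = toggleNote(selectedNotes, 60); // re-add 60 -> [64, 67, 60]
```

An arpeggiator would typically sort the held notes by pitch; here the array itself is the playback order.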

The Euclidean control modifies the rhythm of the sequence independently of the notes.
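
Here is a small sketch of how a Euclidean rhythm can gate a note list without changing it. It uses a simple modular formulation rather than Bjorklund's algorithm itself, so for some settings the pattern may be a rotation of what a Bjorklund implementation returns. The function names, and the choice to advance through the notes only on hits, are assumptions for the example.

```typescript
// Spread `hits` onsets as evenly as possible over `steps` slots.
// Modular formulation (not Bjorklund's algorithm proper).
function euclid(hits: number, steps: number): boolean[] {
  const pattern: boolean[] = [];
  for (let i = 0; i < steps; i++) {
    pattern.push((i * hits) % steps < hits);
  }
  return pattern;
}

// Walk the rhythm for `count` steps; null marks a rest.
// Assumption: the note index advances only when a hit occurs.
function renderSteps(
  notes: number[],
  rhythm: boolean[],
  count: number
): (number | null)[] {
  const out: (number | null)[] = [];
  if (notes.length === 0 || rhythm.length === 0) {
    for (let i = 0; i < count; i++) out.push(null);
    return out;
  }
  let noteIndex = 0;
  for (let step = 0; step < count; step++) {
    if (rhythm[step % rhythm.length]) {
      out.push(notes[noteIndex % notes.length]);
      noteIndex++;
    } else {
      out.push(null);
    }
  }
  return out;
}

// 3 hits over 8 steps: hit, rest, rest, hit, rest, rest, hit, rest
const tresillo = euclid(3, 8);
const rendered = renderSteps([64, 67, 60], tresillo, 8);
```

Changing the hits or steps reshapes where the notes land, while the note order stays exactly as selected.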

Generative Sequencer Implementation

The state of these parameters is saved in the Node.js process and forwarded down to the SuperCollider "replica" store; the SuperCollider sequencer then uses it to render the actual stream of notes.
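
The flow above can be pictured with a stripped-down store. This is a sketch under stated assumptions, not the project's code: it uses a hand-written reducer instead of Redux, the state shape and action names are invented, and `send` is a stand-in for whatever transport actually carries state to SuperCollider.

```typescript
// Hypothetical state shape for one sequencer.
interface SequencerState {
  dur: number;
  legato: number;
  notes: number[];
  euclidHits: number;
  euclidSteps: number;
}

// Hypothetical actions dispatched by the touch UI.
type SequencerAction =
  | { type: "SET_DUR"; dur: number }
  | { type: "SET_EUCLID"; hits: number; steps: number };

function sequencerReducer(
  state: SequencerState,
  action: SequencerAction
): SequencerState {
  switch (action.type) {
    case "SET_DUR":
      return { ...state, dur: action.dur };
    case "SET_EUCLID":
      return { ...state, euclidHits: action.hits, euclidSteps: action.steps };
    default:
      return state;
  }
}

// `send` is a placeholder for the real transport to the replica store.
function makeStore(initial: SequencerState, send: (payload: string) => void) {
  let state = initial;
  return {
    getState: (): SequencerState => state,
    dispatch(action: SequencerAction): void {
      state = sequencerReducer(state, action);
      send(JSON.stringify(state)); // forward the whole state after each change
    },
  };
}

// Usage: collect forwarded messages in an array instead of sending them anywhere.
const forwarded: string[] = [];
const store = makeStore(
  { dur: 0.5, legato: 0.8, notes: [60, 64], euclidHits: 3, euclidSteps: 8 },
  (payload) => forwarded.push(payload)
);
store.dispatch({ type: "SET_EUCLID", hits: 5, steps: 8 });
```

The point of the arrangement is that the Node.js side holds the single source of truth and the SuperCollider side only ever receives copies of it.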

To see an example, check out the SynkopaterOutboardSequencer SuperCollider class that uses the Bjorklund2 class from the Bjorklund SuperCollider Quark.

On the React / Redux side, check out the EuclideanTouchControl React component that uses a Canvas and the PIXI.js library in the EuclideanVisualizerRenderer component to render the Euclidean diagram (with the help of a bjorklund-js npm module).

Routing Setup

Currently I am routing everything through Ableton Live and playing with two generative sequencers each feeding an analog synth with a separate delay line. The length of the delay is always a factor of the sequencer's event length.
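
For a sense of the numbers involved, here is a small sketch that reads "factor" as an integer divisor of the event length. That reading, and the function name, are assumptions made for the example.

```typescript
// Hypothetical helper: delay time in seconds when the delay is the
// event length divided by a whole number, at a given tempo.
function delaySeconds(eventBeats: number, divisor: number, bpm: number): number {
  const delayBeats = eventBeats / divisor;
  return (delayBeats * 60) / bpm;
}

// A one-beat event at 120 bpm with the delay set to half the event length.
const halfEventDelay = delaySeconds(1, 2, 120); // 0.25 seconds
```

Because the delay stays locked to a division of the event length, the repeats fall on the sequencer's own rhythmic grid rather than drifting against it.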

Demo

Here is a demo. Enjoy, and please feel free to get in touch if you'd like to chat about generative music, SuperCollider, JavaScript, or any intersection of them.

Generative Sequencer Performance Touch UI Demo